Finding weighted sums of vectors

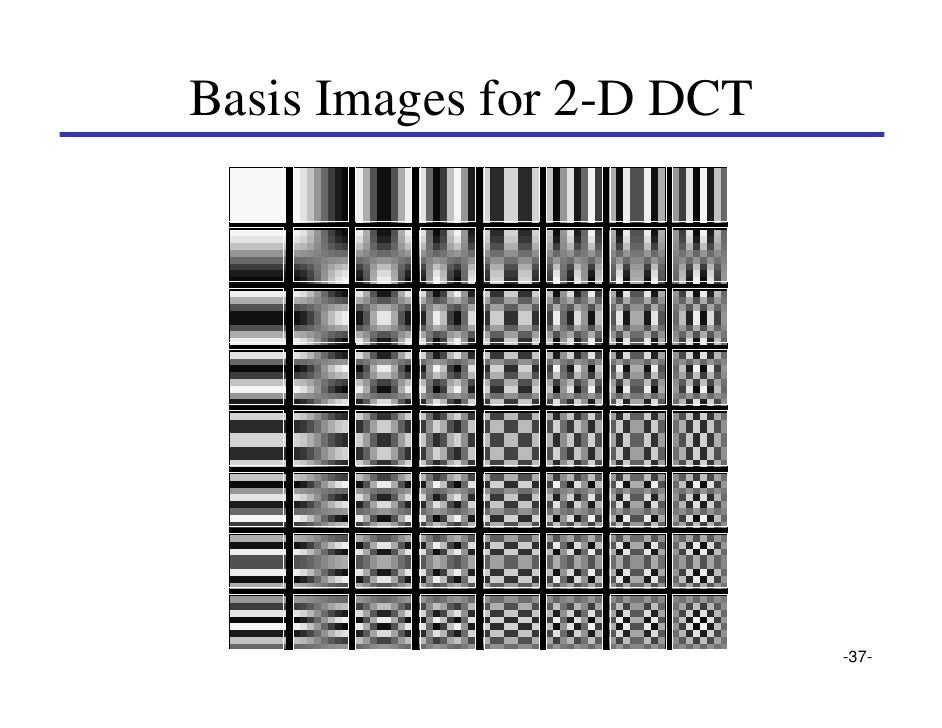

Suppose you have a vector that you want to represent as a weighted sum of other vectors. This happens to me all the time. For example, I might have a small part of an image (called a patch), and I want to have a compressed version of it, like when the image gets compressed by JPEG. In that case, the patch is represented as the sum of a bunch of simple patches that look like this:

If I have a simple vertical gradient in the patch, I could give the second basis patch in the first column a weight of 1, and all the others a weight of zero. A more complicated patch would need several of the basis patches to have weights between 0 and 1. But I would almost never need to use any patches except the ones in the upper left hand corner, unless the patch was from a picture of a checkerboard or something.

Another situation where I need to take a weighted sum of a bunch of vectors is in AI programs. Suppose you have a vector that represents an idea, like "childhood," and another vector that represents "old age." Then the vector for "adolesence" would be a sum of the two weighted more heavily toward childhood, and the vector for "adulthood" would be a sum of the two weighted more heavily towards old age.

A third time I needed this was in trying to estimate structure from motion-- trying to guess the shape of an object from the way points on the surface appeared as a camera moved around the object.

Another way of saying it is that I want to represent the vector in a new coordinate system-- a coordinate system whose axes are whatever vectors I choose. Anyway, it's a problem that's come up at least half a dozen times in my career.

If the number of independent vectors you want to sum up exactly matches the number of components in the result, then this problem can be solved by math you might have learned in high school Algebra II. You take all the vectors you want a weighted sum of (call them vec1, vec2, and vec3), and you stack them next to each other to make a matrix (at left.) then you put the goal vector on the right of the equation. The weights are the vector that makes the equation below true:

If I have a simple vertical gradient in the patch, I could give the second basis patch in the first column a weight of 1, and all the others a weight of zero. A more complicated patch would need several of the basis patches to have weights between 0 and 1. But I would almost never need to use any patches except the ones in the upper left hand corner, unless the patch was from a picture of a checkerboard or something.

Another situation where I need to take a weighted sum of a bunch of vectors is in AI programs. Suppose you have a vector that represents an idea, like "childhood," and another vector that represents "old age." Then the vector for "adolesence" would be a sum of the two weighted more heavily toward childhood, and the vector for "adulthood" would be a sum of the two weighted more heavily towards old age.

A third time I needed this was in trying to estimate structure from motion-- trying to guess the shape of an object from the way points on the surface appeared as a camera moved around the object.

Another way of saying it is that I want to represent the vector in a new coordinate system-- a coordinate system whose axes are whatever vectors I choose. Anyway, it's a problem that's come up at least half a dozen times in my career.

If the number of independent vectors you want to sum up exactly matches the number of components in the result, then this problem can be solved by math you might have learned in high school Algebra II. You take all the vectors you want a weighted sum of (call them vec1, vec2, and vec3), and you stack them next to each other to make a matrix (at left.) then you put the goal vector on the right of the equation. The weights are the vector that makes the equation below true:

vec1

|

vec2

|

vec3

|

||||

1

|

-2

|

3

|

weight1

|

19

|

||

0

|

5

|

6

|

weight2

|

-4

|

||

2

|

3

|

9

|

weight3

|

7

|

Weight1 gets applied to vertical vec1, weight2 gets applied to vec2, and so on. This is just a system of linear equations that needs to be solved, and you can do that by taking the inverse of the matrix, and multiplying that inverse by the goal, to get the weights (being careful about left and right multiplication how you do when multiplying by matrices).

The trouble is, you can only take the inverse of a square matrix. In real life, you usually have more or fewer vectors you want to sum than components in the goal vector. If you have too many then they're not going to be independent. If you have too few, the goal vector may not lie in the space spanned by the basis. In that case, you need to do the best you can: get weights so that the weighted sum is as close as possible to the goal vector, but not necessarily exactly equal to it.

Luckily, in 1920, E.H. Moore discovered the right way to do this. In 1955, Roger Penrose independently discovered the same solution, so they call it the "Moore-Penrose pseudoinverse." I don't know much about E.H. Moore, but Roger Penrose is a cool guy. He invented the Penrose aperiodic tiles (he was friends with M.C.Escher), he made up twistors which are the foundation of loop quantum gravity, and he wrote some interesting and controversial books about quantum mechanics in the brain.

I don't really know how the pseudoinverse works-- I learned how to write a program that would converge to the solution in one of my PhD classes, but I've forgotten. But it doesn't matter, you can just use it without knowing how it works. It's very fast in Matlab (it's called 'pinv'), and it gives the weights with no trouble. There's a trick you can do to get non-negative weights, if that's important for your problem (it's called 'lsqnonneg' in Matlab).

Comments